Hardware

We mainly use four pieces of hardware:

1) Baxter robot, with its left hand gripper and right hand camera

2) Wooden cubes

3) Cardboard to build the map

4) USB camera and tripod

We initially planned a 5x5 map built from 3-inch cubes. Because of the limited reach of Baxter's arm, we had to reduce it to a 4x4 map with 2-inch cubes. The map is built on cardboard, with 9 cubes representing walls and boxes and 3 small pieces of cardboard marking the 2 destinations and the start point.

We first planned to use the right hand camera to detect the whole map, but its image quality turned out to be too poor: it could not reliably detect even one or two AR tags, let alone all of them. We therefore switched to a USB camera, which detects AR tags with better quality and stability, and mounted it on a tripod.

Software

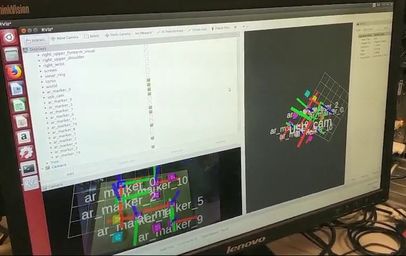

Step 1 : Use the USB camera to read the map

roslaunch "webcam_track.launch" in directory "project_camera/src/ar_track_alvar/launch" : let the USB camera detect the AR tags.

rosrun rviz rviz : visualize the map and adjust the camera until it sees the whole map.

run "listener.py" in "project/src/control/src/others" : subscribe to the AR tag positions on topic /tf and save them in map.txt (in the same directory).

Step 2 : Open Baxter's right hand camera and detect the origin

roslaunch "webcam_track.launch" in directory "project/src/ar_track_alvar/launch" : let Baxter's right hand camera detect the AR tags.

rosrun rviz rviz : visualize the detection and adjust the right hand camera until it sees the origin of the map.

Step 3 : Let Baxter play

rosrun baxter_interface joint_trajectory_action_server.py : run Baxter's joint trajectory controller.

roslaunch baxter_moveit_config baxter_grippers.launch : start path planning.

run "iK_example.py" in "project/src/control/src/others" : play the game.
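To illustrate what the listener step has to produce, here is a minimal sketch of turning measured tag positions into a grid. It is not the code in "listener.py". It assumes each tag position is already an (x, y) offset in metres from the origin tag, that cells are 2 inches apart (the cube size), and that the map is 4x4. The symbols ("#" wall, "$" box, "." destination, "@" start, "-" empty) and the function names are hypothetical, since the format of map.txt is not described here.

```python
CELL_M = 2 * 0.0254  # 2-inch cubes, expressed in metres
GRID = 4             # 4x4 map


def snap_to_cell(x, y, cell=CELL_M):
    """Round a metric (x, y) offset from the origin tag to a (row, col) index."""
    return int(round(y / cell)), int(round(x / cell))


def build_grid(tags, size=GRID):
    """Build the map as a list of row strings.

    tags: iterable of (symbol, x, y), with x and y in metres relative to
    the origin tag. Tags that fall outside the grid are ignored.
    """
    grid = [["-"] * size for _ in range(size)]
    for symbol, x, y in tags:
        row, col = snap_to_cell(x, y)
        if 0 <= row < size and 0 <= col < size:
            grid[row][col] = symbol
    return ["".join(cells) for cells in grid]
```

Rounding to the nearest cell absorbs a few millimetres of detection noise. The resulting rows could then be written out with `"\n".join(rows)`.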

System Functional Description

1. The USB camera detects the grid of AR tags and records their positions relative to the origin of the map. These positions are then transformed into positions relative to the robot's base, which are used for inverse kinematics.

2. For the automatic mode: a search algorithm reads the map into a 2-D array and returns a list of paths, which are fed into the move function to solve the Sokoban puzzle.

3. Baxter's right hand camera is adjusted to detect the AR tag that marks the origin of the map.

4. Baxter's left hand first moves to the start point set in the map.

5. Baxter follows the paths returned in step 2 and grips each box to solve the puzzle.
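The frame change in step 1 can be sketched as a rigid transform. This is an illustration only, assuming the map lies flat on a horizontal table, so the map frame differs from the base frame by a rotation about the vertical axis plus a translation. The function name and parameters are hypothetical. In a ROS setup the full transform would normally come from tf rather than being computed by hand.

```python
import math


def map_to_base(p_map, origin_in_base, yaw):
    """Convert a point from the map-origin frame to the robot base frame.

    p_map: (x, y, z) of a tag relative to the origin tag.
    origin_in_base: (x, y, z) of the origin tag in the base frame.
    yaw: rotation of the map frame about the base z axis, in radians.
    """
    x, y, z = p_map
    ox, oy, oz = origin_in_base
    c, s = math.cos(yaw), math.sin(yaw)
    # Rotate about z, then translate by the origin's position.
    return (ox + c * x - s * y, oy + s * x + c * y, oz + z)
```

The returned point is what an inverse kinematics request for the gripper would take as its target position.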

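The search in step 2 is not specified further. One common way to solve small Sokoban maps is a breadth-first search over (player position, box positions) states, sketched below under the standard push rules. This is not necessarily the algorithm used in the project. The map symbols ("#" wall, "$" box, "." destination, "@" start, "-" empty) and the move letters are assumptions made for the example.

```python
from collections import deque

MOVES = {"U": (-1, 0), "D": (1, 0), "L": (0, -1), "R": (0, 1)}


def solve(rows):
    """Return a shortest move string that solves the map, or None."""
    walls, goals, boxes0, start = set(), set(), set(), None
    for r, line in enumerate(rows):
        for c, ch in enumerate(line):
            if ch == "#":
                walls.add((r, c))
            elif ch == ".":
                goals.add((r, c))
            elif ch == "$":
                boxes0.add((r, c))
            elif ch == "@":
                start = (r, c)
    height, width = len(rows), len(rows[0])

    def free(cell, boxes):
        """True if the cell is on the map and holds no wall or box."""
        r, c = cell
        inside = 0 <= r < height and 0 <= c < width
        return inside and cell not in walls and cell not in boxes

    first = (start, frozenset(boxes0))
    queue = deque([(first, "")])
    seen = {first}
    while queue:
        (player, boxes), path = queue.popleft()
        if goals <= boxes:  # every destination holds a box
            return path
        for name, (dr, dc) in MOVES.items():
            nxt = (player[0] + dr, player[1] + dc)
            new_boxes = boxes
            if nxt in boxes:
                # Stepping into a box pushes it one cell further.
                beyond = (nxt[0] + dr, nxt[1] + dc)
                if not free(beyond, boxes):
                    continue
                new_boxes = (boxes - {nxt}) | {beyond}
            elif not free(nxt, boxes):
                continue
            state = (nxt, new_boxes)
            if state not in seen:
                seen.add(state)
                queue.append((state, path + name))
    return None
```

On a 4x4 map the state space is small enough that plain breadth-first search finishes quickly, and it returns a solution with the fewest moves. For example, `solve(["@$.-", "----", "----", "----"])` returns `"R"`.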